Shipping AI features in a product used by hundreds of millions of people is a different problem than shipping AI features in a startup. The latency budgets are tighter, the failure modes have to be softer, and the user expectations are — counterintuitively — both higher and more forgiving at the same time.

Here are a few things I've learned working on Copilot inside Microsoft 365.

Orchestration matters - the model is not the product

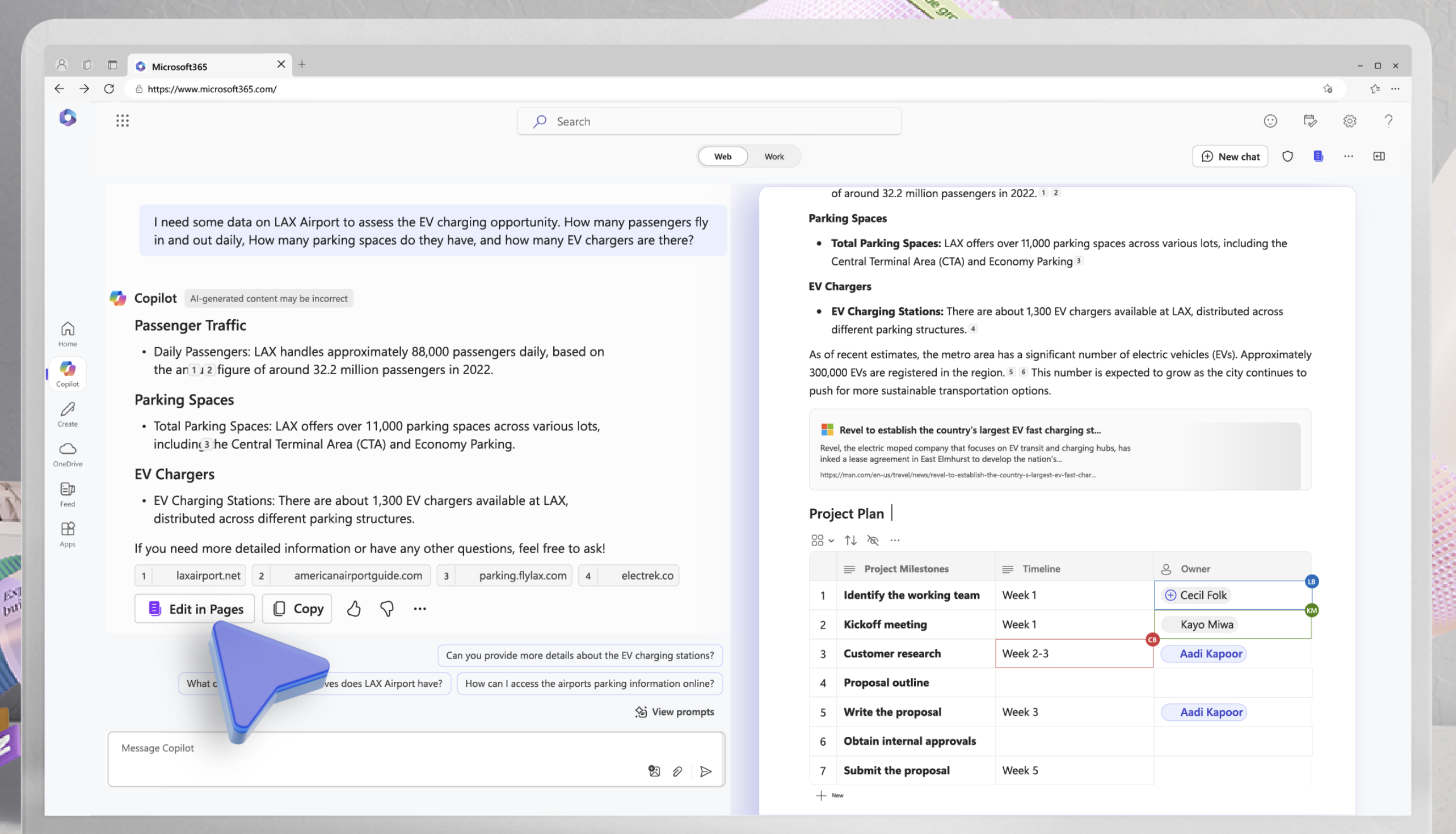

One thing that surprised me was how much of the engineering effort goes into building and maintaining the orchestration around the model. The raw model is just a tool; the real product is the system you build around it. Behind the Copilot UI that most enterprise users see is a complex orchestration layer that handles everything from session management to dynamic prompting, tool calling, and working with the user's enterprise content.

Only after working with a large orchestration system do you understand where the value of the product lies. The model is a critical component, but it's not the whole story. The orchestration layer is where you can add a lot of value and differentiate your product from the competition.

Changing the orchestration is also when you see how much compliance work a company as big as Microsoft carries. There are no shortcuts for shipping faster. Everything has to work and respect the established privacy and security contracts.

Streaming is not optional

If your AI feature takes more than a second to produce output, you need to stream. Users tolerate waiting while something is happening far better than they tolerate a blank state followed by a sudden content dump.

The implementation complexity is real — you need to handle partial JSON, manage cancellation, and think about what "loading" means at the component level. But it's table stakes for any production LLM feature.
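To make the partial-JSON and cancellation points concrete, here is a minimal Python sketch. It is not how Copilot does it: `fake_model_stream`, `try_parse`, and `consume` are hypothetical names, and the chunk contents are made up. The idea is simply to buffer chunks, show the text received so far on every chunk, treat a parse failure as "not done yet" rather than as an error, and check a cancellation flag before handling each chunk.

```python
import asyncio
import json
from typing import AsyncGenerator, Callable, Optional


async def fake_model_stream() -> AsyncGenerator[str, None]:
    """Hypothetical stand-in for a model client that yields raw text chunks."""
    for chunk in ['{"title": "Q3 ', 'plan", "items"', ': [1, 2', ', 3]}']:
        await asyncio.sleep(0)  # hand control back to the loop, like a network read
        yield chunk


def try_parse(buffer: str) -> Optional[dict]:
    """Return the parsed value once the buffer is complete JSON, else None."""
    try:
        return json.loads(buffer)
    except json.JSONDecodeError:
        return None  # incomplete so far; keep buffering


async def consume(
    stream: AsyncGenerator[str, None],
    on_text: Callable[[str], None],
    should_cancel: Callable[[], bool],
) -> Optional[dict]:
    """Buffer chunks, report progress, and stop early on cancel or complete JSON."""
    buffer = ""
    parsed: Optional[dict] = None
    try:
        async for chunk in stream:
            if should_cancel():
                return None  # the user navigated away or pressed stop
            buffer += chunk
            on_text(buffer)  # render what we have instead of a blank state
            parsed = try_parse(buffer)
            if parsed is not None:
                break
    finally:
        await stream.aclose()  # release the underlying stream on every exit path
    return parsed


if __name__ == "__main__":
    final = asyncio.run(consume(fake_model_stream(), print, lambda: False))
    print("final:", final)
```

Re-parsing the whole buffer on every chunk is the simplest thing that works for small JSON objects. For large outputs an incremental parser would likely be a better fit.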

For the Pages scenario, streaming gets even more complex. Pages is an app of its own, with its own DDS and its own client-server architecture. To stream content into a page, we had to build a custom streaming solution that integrates with the page's architecture and handles the edge cases of partial updates, error handling, and synchronization with the page's state.

Latency compounds

Every network hop, every token of context, every post-processing step you add compounds your end-to-end latency. I've seen 200ms added by a simple string transformation that ran synchronously on the critical path.

Profile your full pipeline end-to-end before you start optimizing individual pieces. The bottleneck is usually not where you expect it.
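One low-effort way to get that end-to-end view is to wrap each stage of the request in a timer and compare the totals. The sketch below is an illustration only: `PipelineTimer` and the stage names are hypothetical, and the `time.sleep` calls stand in for real work.

```python
import time
from contextlib import contextmanager
from typing import Callable, Dict, Iterator


class PipelineTimer:
    """Accumulates wall-clock time per named stage of a request."""

    def __init__(self, clock: Callable[[], float] = time.perf_counter) -> None:
        self._clock = clock  # injectable so the timer can be tested deterministically
        self.durations_ms: Dict[str, float] = {}

    @contextmanager
    def stage(self, name: str) -> Iterator[None]:
        start = self._clock()
        try:
            yield
        finally:
            # Record the time even if the stage raised.
            elapsed_ms = (self._clock() - start) * 1000.0
            self.durations_ms[name] = self.durations_ms.get(name, 0.0) + elapsed_ms

    def slowest(self) -> str:
        """Name of the stage with the largest accumulated time."""
        return max(self.durations_ms, key=lambda n: self.durations_ms[n])


# Example usage with placeholder stages.
timer = PipelineTimer()
with timer.stage("retrieve_context"):
    time.sleep(0.002)
with timer.stage("model_call"):
    time.sleep(0.010)
with timer.stage("post_process"):
    time.sleep(0.001)

for name, ms in sorted(timer.durations_ms.items(), key=lambda kv: -kv[1]):
    print(f"{name}: {ms:.1f} ms")
```

Sorting by accumulated time puts the real bottleneck at the top, which is often a stage nobody thought to look at, such as a synchronous transformation on the critical path.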